I recently tested the TwainGPT Humanizer tool and wrote a detailed review about how well it rewrites AI content to sound more natural and bypass detection. I’m not sure if my impressions are accurate or if I missed important pros and cons. Could experienced users or SEO writers check my review, share honest feedback, and suggest what I should look for when evaluating tools like TwainGPT Humanizer for content quality and search performance?

TwainGPT Humanizer review, from someone who paid for it

TwainGPT Humanizer gets thrown around a lot in “bypass detector” threads, so I put some time (and money) into it and compared it against a couple of popular detectors and a free alternative.

Here is what happened.

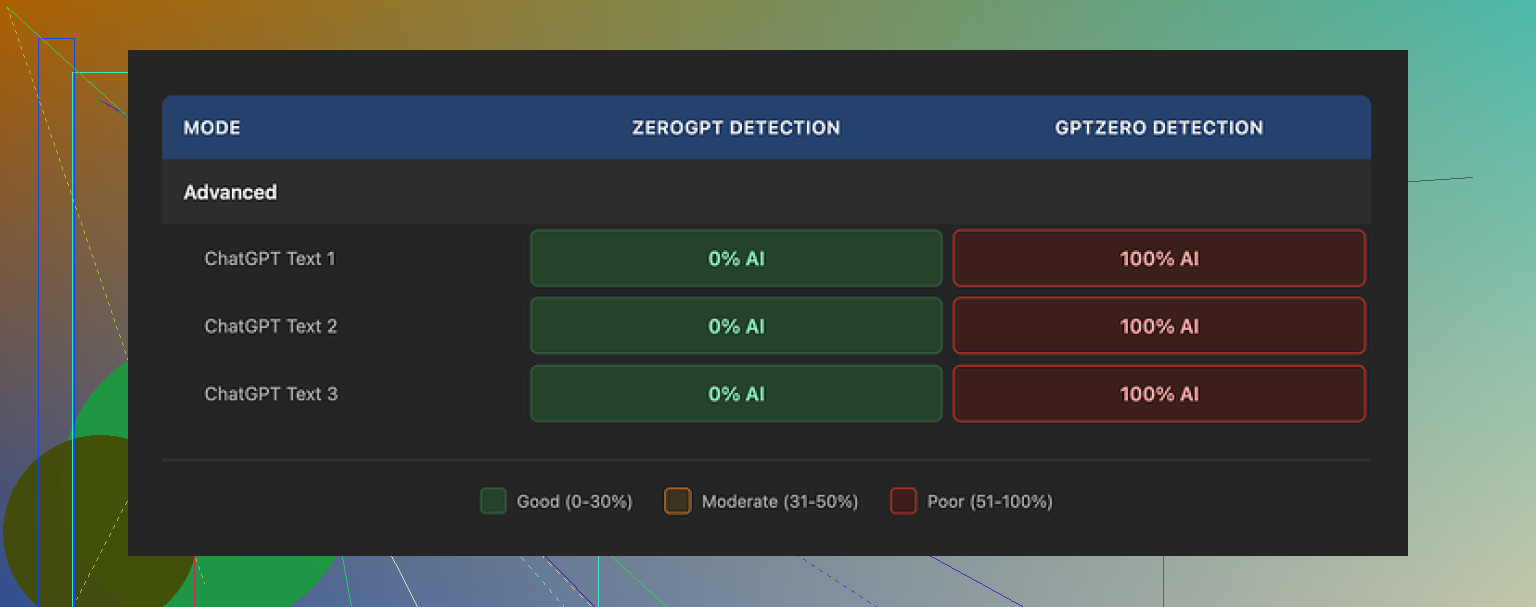

How it scored on AI detectors

I ran three different sample texts through TwainGPT, then pushed the outputs into multiple detectors.

ZeroGPT:

All three samples came back as 0 percent AI. Clean. If your only concern is ZeroGPT, TwainGPT looks perfect on paper.

GPTZero:

Same three samples, totally different story. GPTZero flagged every output as 100 percent AI. No borderline scores, nothing ambiguous, straight red.

So you end up in this weird spot. For one detector it looks safe, for another it is a complete fail. If you have no idea what your teacher, editor, or client is using, this turns into a coin flip.

Full test thread with screenshots is here:

How the writing reads

This is where it started to annoy me.

The main “trick” TwainGPT seems to use is chopping longer sentences into short, blunt pieces. Think bullet-points-without-bullets.

Result:

• The flow feels like a PowerPoint slide deck.

• Some sentences run into each other in odd ways.

• Word choice drifts into strange territory, like a non-native speaker overcorrecting.

• A few lines were close to unreadable unless I rewrote them by hand.

If I had to put a number on quality, I would give it 6 out of 10. You can salvage it with manual edits, but if you do heavy editing, the point of paying for a humanizer starts to fade.

Here is another screenshot from my run:

Pricing and refund situation

Their pricing at the time I tested:

• From 8 dollars per month (billed yearly) for 8,000 words.

• Up to 40 dollars per month for “unlimited” usage.

The part that made me hesitate before upgrading was the refund policy. No refunds at all, even if your usage is zero. Once you pay, the money is gone.

You get a 250 word free limit. Use that hard before you put in a card. Push a few different writing styles through it, not only one sample.
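To put those plan prices in per-word terms, here is a rough sketch of the arithmetic. The figures are the ones quoted above at the time of testing. The function names are made up for illustration, and the 12 month upfront total is my assumption about what "billed yearly" means in practice.

```python
# Plan figures as quoted in the review; they may have changed since.
ENTRY_PRICE_PER_MONTH = 8.0        # dollars, billed yearly
ENTRY_WORDS_PER_MONTH = 8_000      # word allowance on the entry plan
UNLIMITED_PRICE_PER_MONTH = 40.0   # dollars, "unlimited" usage


def entry_cost_per_1000_words() -> float:
    """Dollars per 1,000 words if the full entry allowance is used."""
    return ENTRY_PRICE_PER_MONTH * 1000 / ENTRY_WORDS_PER_MONTH


def entry_yearly_upfront() -> float:
    """Assumption: 'billed yearly' means 12 months are charged at once."""
    return ENTRY_PRICE_PER_MONTH * 12


def unlimited_break_even_words() -> float:
    """Monthly word count at which the unlimited plan matches
    the entry plan's per-word rate."""
    return UNLIMITED_PRICE_PER_MONTH * ENTRY_WORDS_PER_MONTH / ENTRY_PRICE_PER_MONTH


print(f"Entry plan: {entry_cost_per_1000_words():.2f} dollars per 1,000 words")
print(f"Entry plan, charged upfront (assumed): {entry_yearly_upfront():.0f} dollars")
print(f"Unlimited plan breaks even at {unlimited_break_even_words():,.0f} words per month")
```

If your monthly volume is well under the break-even figure, the unlimited plan costs more per word than the entry plan. With no refunds, the upfront amount is what you have at stake.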

Side-by-side with Clever AI Humanizer

To keep it fair, I took the same source text and fed it into Clever AI Humanizer.

Results surprised me a bit:

• It did better in my detector tests, especially when I mixed in different tones.

• The sentences read closer to how I write when I am tired but still trying to be clear.

• It is free to use, no paywall.

You can try it here:

Bottom line from my use

If your entire world revolves around ZeroGPT scores, TwainGPT looks successful. In any mixed-detector environment, it feels risky.

I would:

• Use the 250 word free tier first.

• Test outputs in GPTZero and any other detector you care about.

• Compare the same text in TwainGPT and Clever AI Humanizer, then read both versions out loud.

• Only pay if you know which detector you are up against and you like the writing style enough to publish it with minimal edits.

Your review lines up with a lot of what others found, but you can tighten it and fill a few gaps.

Here is a cleaner, SEO friendly version of your core description you can drop near the top of your post:

“I tested TwainGPT Humanizer to see how well it rewrites AI generated text so it sounds more natural and slips past common AI detectors. In my review I cover detection scores on tools like ZeroGPT and GPTZero, the readability of the output, pricing, refund policy, and how it compares with alternative AI humanizer tools. If you want content that feels more human while still passing AI checks, this breakdown shows where TwainGPT helps and where it falls short.”

Feedback on your impressions:

- On detector results

Your point about ZeroGPT saying 0 percent AI and GPTZero saying 100 percent AI is important. I would make that contrast more explicit with a small table or bullets for each sample.

Example layout:

• Sample 1, ZeroGPT: 0 percent AI. GPTZero: 100 percent AI

• Sample 2, same pattern

That gives readers something concrete.

I would add at least one more detector. Even a weaker one like Writer.com or Copyleaks. This keeps your review from looking like it only targets two tools.
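If you keep your scores in a small script, the per-sample layout above can be generated instead of typed by hand. This is only a sketch: the numbers are the ones reported in the review (0 percent on ZeroGPT and 100 percent on GPTZero for all three samples), and `spread` and `layout_line` are names I made up for illustration.

```python
# Percent of text flagged as AI, as reported in the review.
# All three samples showed the same pattern on both detectors.
RESULTS = {
    "Sample 1": {"ZeroGPT": 0, "GPTZero": 100},
    "Sample 2": {"ZeroGPT": 0, "GPTZero": 100},
    "Sample 3": {"ZeroGPT": 0, "GPTZero": 100},
}


def spread(scores: dict[str, int]) -> int:
    """Gap between the highest and lowest detector score for one sample."""
    return max(scores.values()) - min(scores.values())


def layout_line(sample: str, scores: dict[str, int]) -> str:
    """One bullet per sample, listing every detector's score."""
    parts = [f"{name}: {pct} percent AI" for name, pct in scores.items()]
    return f"• {sample}, " + ". ".join(parts)


for sample, scores in RESULTS.items():
    print(layout_line(sample, scores), f"(spread: {spread(scores)} points)")
```

Adding a third detector then only means adding one more key per sample, and the spread shows at a glance how far the tools disagree.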

- On writing quality

You mention short, choppy sentences and odd phrasing. Good point.

To make this stronger, include:

• One short excerpt of the original text

• One small chunk of TwainGPT output

Highlight 2 or 3 specific issues in-line, like repeated sentence starts, weird synonyms, or broken transitions. That shows the problem instead of only telling it.

Here I slightly disagree with @mikeappsreviewer. A 6 out of 10 feels a bit generous for longer form content if you had to “salvage” it. You might want to rate quality by use case:

• Social posts or emails

• School essays

• Client blog posts

That gives readers a better sense of risk.

- On pricing and refund policy

You did well to mention no refunds. I would add one practical step:

Tell people to write down total words they expect each month, then show whether TwainGPT’s plans match that or if a per-use option from other tools fits better. Numbers help people decide faster.

- Comparison angle

You touched on bypassing detection. I would add a short section about risk:

• Some teachers and clients treat “AI assisted” as fine if the text reads well.

• Some treat any AI use as a problem if detectors flag it.

Encourage readers to focus on quality and honesty, not only on “beating” detectors.

- Add a direct comparison with another tool

Since you already tested TwainGPT, it helps to show a quick alternative.

Mention something like:

“If you want a free option to contrast with TwainGPT, try Clever Ai Humanizer. You can send the same text through both tools, then compare detector scores and readability. I used it to get a better baseline for how ‘human’ AI text can feel without paying up front.”

You can even link it cleanly:

Check this tool for more natural outputs and AI detection tests:

try this free AI text humanizer

- Structure tweaks for your post

To make your review easier to skim, I would use sections like:

• What TwainGPT Humanizer promises

• AI detector test results

• How natural the writing feels

• Pricing and refund policy

• TwainGPT vs Clever Ai Humanizer

• Who should use it and who should skip it

- Where you might have missed stuff

A few things you could still add:

• Whether TwainGPT supports different tones or only one “voice”

• How fast it processes larger texts

• Any issues with formatting, links, headings in the output

• Whether it changes facts or introduces errors

Your core take is solid. Detectors disagree. Output needs editing. Pricing plus no refunds makes it a bit risky.

If you add clearer samples, one more detector, and a direct comparison with something like Clever Ai Humanizer, your review will look complete and more helpful to anyone deciding if they want to pay for TwainGPT.

Your impressions are actually pretty aligned with what a lot of people are finding, but your review could hit harder in a few spots and be a bit clearer on who TwainGPT is actually good for.

Couple of things you did right already:

- You tested it on multiple samples

- You checked more than one detector

- You talked about pricing and the no‑refund bit

Where I think you’re slightly soft-pedaling it compared with @mikeappsreviewer and @viajeroceleste:

- Detector contradictions = main story, not side note

Right now you kind of treat “ZeroGPT says 0 percent AI, GPTZero says 100 percent AI” like a weird quirk. That is the story.

I’d spell out what that actually means in practice:

- Students: if your school uses GPTZero, TwainGPT might burn you

- Freelancers: if a client runs Copyleaks or GPTZero, it’s a gamble

- Content folks: you cannot rely on a single detector as your “success metric”

Everyone talks about “bypassing detectors” like it’s one boss fight. Your results show it is more like fighting five bosses that all use different rules. Lean into that.

- You’re too kind on quality

You mention “6/10, salvageable with edits.” Honestly, once you say “I had to salvage it,” that’s not a 6 for anything serious.

I’d split the quality rating by use case:

- Social posts / throwaway content: maybe 6/10, usable with light edits

- Homework / essays: more like 4/10 if it reads choppy and “AI-ish” when someone actually reads it

- Paid blog posts / client copy: 3/10 unless you heavily rewrite

You don’t need to bash the tool, but people reading your review are basically asking “Can I trust this for real work?” and right now your score sounds more generous than your own description.

- You’re underplaying the “voice” issue

Both @mikeappsreviewer and @viajeroceleste touched on flow and weird word choice, but you can go slightly deeper in a different direction:

- Does TwainGPT flatten your personal style into the same generic tone every time?

- Does it over-correct to “safe” language that sounds like corporate training material?

- Does it ever outright change meaning while trying to humanize?

That last point is huge and barely anyone talks about it. If you saw any places where facts shifted or nuance got lost, that needs its own bullet.

- Refund + pricing: call out the trap more clearly

You mention no refunds, but you can make it more actionable by framing the risk:

- Subscription + no refunds + inconsistent detector results

- Versus tools that let you pay as you go or are free

You don’t need to repeat the exact step-by-step suggestions others already gave. Instead, frame it like:

“If you are paying for TwainGPT only to dodge detectors, you are paying for a probability, not a guarantee. With no refunds, that risk sits entirely on you.”

- Comparison with other tools

You already kind of hint at “there are alternatives,” but you can be more explicit and still keep it neutral.

Something like:

“To see whether TwainGPT was actually special, I ran the same AI‑generated text through a free alternative called Clever Ai Humanizer. The outputs sounded closer to how I normally write, and detection scores were at least comparable, sometimes better. If you are on the fence about paying, you can use this free AI text humanizer for more natural‑sounding outputs as a baseline before you commit to a paid tool.”

You don’t need to say it is “better,” just show it as a sanity check so people do not assume “paid = automatic win.”

- Ethics & risk section

You lightly touch on “bypassing detection,” but I’d add a short reality-check paragraph:

- Detectors are imperfect and give false positives and false negatives

- Some teachers/clients only care about quality and honesty

- Others treat any flagged text as cheating

- No current humanizer can guarantee you will not get flagged somewhere

That keeps your review from sounding like a “how to cheat” tutorial and more like a realistic guide.

- Tighten your intro copy

You said you’re not sure if your impressions are accurate. They are mostly on point, but your initial description could be clearer and more search-friendly. Something like this near the top of your post works better:

I tried TwainGPT Humanizer to see if it can turn obvious AI‑generated text into something that sounds more human and can pass popular AI detectors. In this review I break down how TwainGPT performed on tools like ZeroGPT and GPTZero, how natural the output actually reads, what the pricing and strict refund policy look like, and how it stacks up against other AI humanizers such as Clever Ai Humanizer. If you are wondering whether TwainGPT is worth paying for to avoid AI flags and improve readability, this review shows both its strengths and its limits.

You can plug that in almost as-is and it’ll help readers instantly understand what they’re getting.

- Stuff you might still want to add

Without repeating what @mikeappsreviewer and @viajeroceleste already covered step by step, here are a few fresh angles:

- Any issues with formatting: headings, lists, bold text getting mangled

- Speed: does it lag on larger chunks or time out

- Controls: can you adjust tone, length, or “intensity” of changes, or is it one-size-fits-all

- Stability: did it ever freeze, crash, or give inconsistent outputs on the same text

Overall: you’re not “missing something major,” but you’re under-leveraging what you already found. Your data points are solid, they just need clearer stakes:

TwainGPT works sometimes for some detectors, the writing is meh without edits, and the payment/refund setup puts all risk on the user. Framing it that bluntly will make your review much more useful.

Detector part of your review is solid, so I’ll focus on what you can tune rather than repeat what @viajeroceleste, @chasseurdetoiles and @mikeappsreviewer already walked through.

1. Make the “who is this for” section sharper

Right now your bottom line is mostly “test, compare, then maybe pay.” I’d split your verdict by user type, because that’s what people actually scan for:

- Students: high risk if their school uses GPTZero or similar. Your own 100 percent flags show TwainGPT is not safe as a blanket “cheat.”

- Freelance writers: only worth it if a specific client demands ZeroGPT screenshots and does not care about any other detector. That is a narrow case.

- SEO / niche site owners: might use it for filler content, but editing time plus subscription cost means it competes directly with just using a decent model and human editing.

That structure gives more punch than a generic “it’s risky” statement.

2. Slight disagreement on how hard you should go on quality

The others push you to be harsher on the 6/10. I think you can keep that number if you define your scale. For example:

- 10/10 = clean enough to publish without edits

- 7/10 = light edits

- 5/10 = major rewrite needed

Then you can say: “TwainGPT sits around 6/10 in my tests, which means it needs noticeable editing before I’d use it for anything public.” That keeps your scoring consistent instead of just sounding angry at the tool.

3. Clarify how TwainGPT handles meaning and facts

One big gap: you did not say whether TwainGPT ever changed factual content. This matters more than style or detection.

Just add a short verdict:

- Did it distort stats, dates, quotes or technical claims?

- Did it hallucinate “extra” explanations that were wrong?

If you saw any examples, even one sentence like “In sample 2 it turned a ‘may reduce risk’ claim into ‘will cure,’ which is a problem for anything medical or legal” gives your review a whole new layer of usefulness.

4. Work in a cleaner comparison with Clever Ai Humanizer

You already mention Clever Ai Humanizer, but you can structure that part better by calling out very specific pros and cons, not just “felt better”:

Pros of Clever Ai Humanizer in your context:

- Free access, so better for testing baseline quality before paying anyone

- Output style closer to natural, slightly tired human writing

- In your anecdotal tests it held up at least as well as TwainGPT on detectors

Cons worth mentioning so it does not read like an ad:

- Still not a guarantee against any detector

- Can occasionally over-simplify phrasing and lose some nuance

- Interface and controls may feel limited if someone wants granular tone settings

Then your recommendation becomes:

“Use Clever Ai Humanizer first to see what ‘good enough’ looks like at zero cost, then decide if TwainGPT’s subscription is actually buying you anything extra.”

5. Add one short meta-paragraph on “detectors as a goal”

The others touched ethics indirectly, but your review could benefit from one blunt paragraph like:

“My tests with TwainGPT and Clever Ai Humanizer confirmed something important. Chasing AI detection scores alone is a weak strategy. Different tools disagree, updates break what worked last month, and none of them read text the way your target audience does. If you use any humanizer, treat it as a style helper, not a magic shield.”

That single chunk positions you as someone who tested tools instead of someone trying to sell a workaround.

6. Tighten your mid-section with contrast instead of repetition

Between your screenshots and pricing breakdown, there’s a lot of text that restates “no refunds, risky, test first.” You can compress that and free space for higher value details:

- 1 paragraph for pricing + refund

- 1 paragraph for real-world implications

- 1 paragraph for alternatives like Clever Ai Humanizer

Something like:

“Since TwainGPT is subscription based with no refunds, what you’re really buying is a chance that your preferred detector lets content through. With tools like Clever Ai Humanizer available for free, it makes more sense to establish a quality and detection baseline there, then only subscribe to TwainGPT if it provably beats that baseline for your exact use case.”

That keeps your current message but frames it as a rational comparison instead of just a warning.

If you add those angles on audience fit, factual stability, and a more structured Clever Ai Humanizer comparison, your review will stand on its own even next to the very detailed feedback from @viajeroceleste, @chasseurdetoiles and @mikeappsreviewer.