I recently submitted some work and it was flagged by a ChatGPT detector for being AI-generated, but I wrote it myself. Has anyone else experienced this? I need advice on what I can do to prove my work is original and understand how reliable these detectors really are.

Yeah, this happens more than people like to admit. AI content detectors, especially the ones trying to pin down ChatGPT-generated work, are honestly all over the place accuracy-wise. They often flag perfectly human writing because they look for patterns such as predictable phrasing, a formal tone, or simply a lack of errors, which, ironically, are exactly what many careful students and pros produce.

I’ve run into this issue with my own stuff; a detector said my personal reflection was 90% AI, which was kind of hilarious since it was literally about my dog chewing my laptop charger (doubt ChatGPT would bother with that level of ridiculous detail). The reality is, these detectors are far from perfect and can’t “prove” anything definitively. They’re more like rough guessers right now.

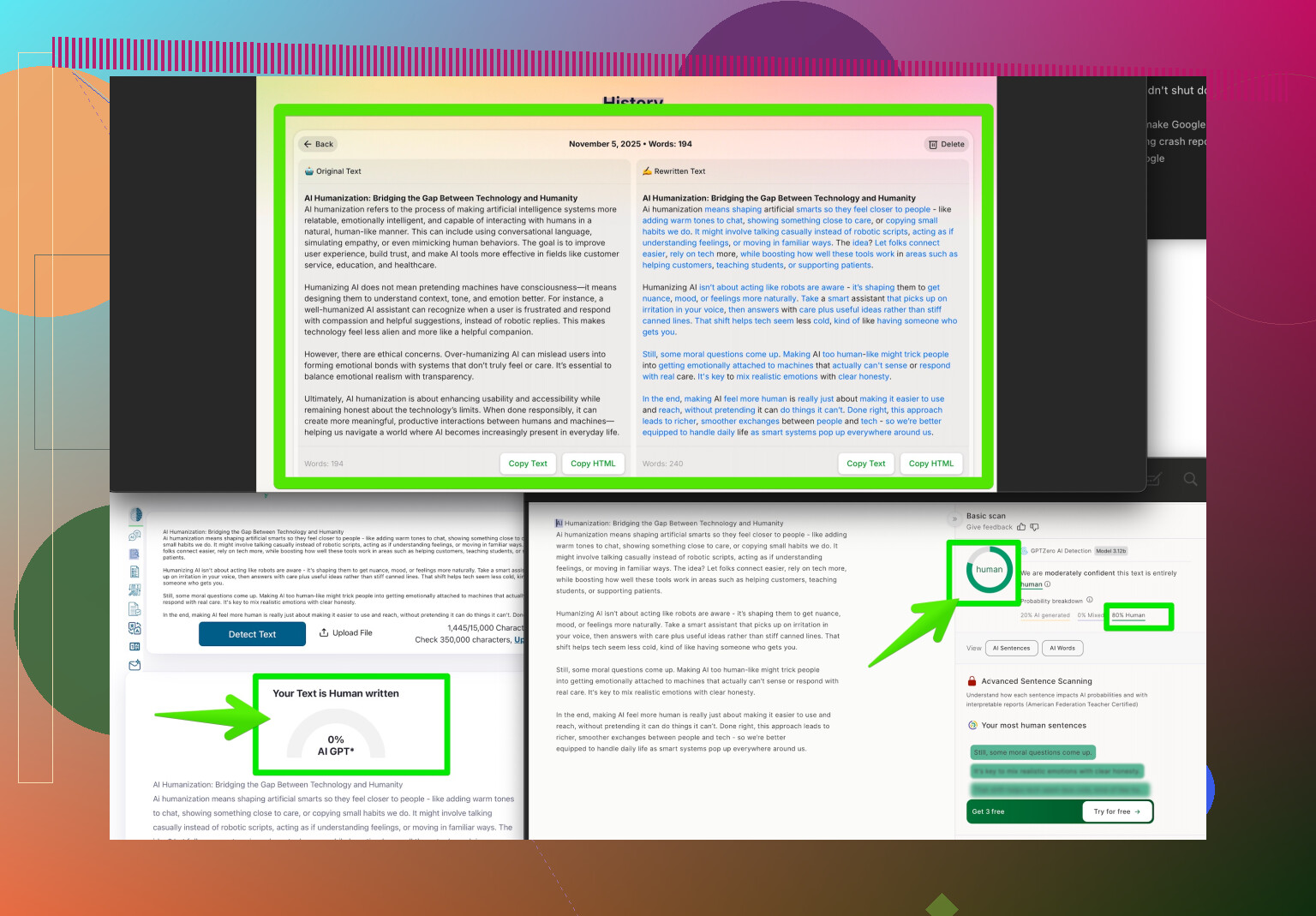

If you need to prove your work is original, save your drafts and edits, or use version history if you’re working in Google Docs. Some schools and clients are convinced once you show the progression of your writing. Sending in your brainstorming notes or outlines can help too. If you need to get past detection for whatever reason (though you shouldn’t HAVE to for your own original work…), there are tools out there like the Clever AI Humanizer that are designed to make text read as less AI-generated, in case you want to give it a shot.

But honestly, the tech just isn’t good enough for anyone to use it as absolute proof. If they push, challenge them for more concrete evidence and maybe point out those tools to show how unreliable detectors can be. Has anyone else had to fight a detector over their own work? It’s so frustrating.

Man, these AI content detectors are like mood rings for your essays: pretty to look at but absolutely useless as proof of anything solid. I totally get why @vrijheidsvogel called them “rough guessers,” but honestly, I’d go even further: they’re glorified coin-flippers with fancy graphs. Actual numbers? OpenAI reported that its own AI text classifier correctly caught only about 26% of AI-written text while wrongly labeling about 9% of human-written text as AI, and the company pulled the tool in 2023 because of its low accuracy. If you’re writing clear, structured, typo-free prose (unlike me right now, lol), you’re basically painting a big ol’ AI bullseye on your back. And don’t even get me started on creative writing or personal stories; sometimes these bots still choke and call them “robotic.” Riiight.

To prove your work is original WITHOUT repeating all those draft/history tricks (which are solid btw), here’s what I’d try:

- Use plagiarism detectors instead. And proudly show the “zero plagiarism” result—that’s still considered more legit by teachers/clients than these sketchy AI detectors.

- If it’s a recurring problem, request a short “live” writing test or oral follow-up. Most people will realize instantly you’re not outsourcing to ChatGPT if you can talk/write with consistency.

- Register the copyright for your material if it’s important or unique enough. Low-key flex, but it can deter more serious accusations.

- Reference something obscure or personal only YOU could know (detectors can’t fact-check your grandma’s pie recipe).

- Try the Clever AI Humanizer if you’re forced to avoid the false flags, but honestly, ask: why should you jump through hoops for a machine’s mistake?

Hot take: The real problem is that these detectors are being used as evidence in places they absolutely shouldn’t be, especially when there’s money or grades on the line. They’re preliminary tools, nothing more. Defend yourself, get loud, and if you want a deeper dive, look at how Reddit users are humanizing AI writing; it’s surprising how much crowd wisdom helps. Hang in there, you’re not alone in fighting the algorithm overlords.