I’m seeing references to ‘Gpt 0’ online, but I can’t find any clear explanation about what it is or how it functions compared to later versions like GPT-3 or GPT-4. I’m trying to understand the basics and whether ‘Gpt 0’ was ever a real product or just a nickname for early AI. Any help clearing this up would be greatly appreciated.

Lol, “GPT 0” is kind of a meme term, not an actual model released by OpenAI or anyone else. Some people drop “GPT-0” online as a joke meaning “no AI” or “plain old human intelligence.” There’s no technical doc, no benchmarks, and no basic version called GPT-0 that you can download or use. The official history actually starts with GPT (just “GPT” or “GPT-1”), then gets way better and beefier with each new version: GPT-2, then the massive and infamous GPT-3, and now GPT-4, which is even more powerful, nuanced, and context-aware.

If you want to compare, GPT-1 was like, baby’s first AI — 117M parameters, pretty basic, mostly a proof of concept. GPT-2 was a big leap (1.5B parameters), and GPT-3 (175B) blew the doors off in terms of what it could generate. GPT-4’s details are a bit secretive, but it’s way more advanced in logic, context, and “keeping its facts straight.” So, “GPT-0” is just a joke about how things were before these AIs, or people using no AI filter at all.
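If you want a rough feel for how big those jumps were, here is a quick sketch that only uses the parameter counts quoted above. The `PARAMS` table and the `growth_factors` helper are names made up for this illustration, and GPT-4 is left out because its size isn't public.

```python
# Parameter counts as quoted in this thread (GPT-4's is not public, so it is omitted).
PARAMS = {
    "GPT-1": 117_000_000,
    "GPT-2": 1_500_000_000,
    "GPT-3": 175_000_000_000,
}


def growth_factors(params):
    """Return how many times larger each model is than the one listed before it."""
    names = list(params)  # dicts keep insertion order, so this is release order
    return {
        f"{prev} -> {curr}": params[curr] / params[prev]
        for prev, curr in zip(names, names[1:])
    }


for step, factor in growth_factors(PARAMS).items():
    print(f"{step}: about {factor:.0f}x more parameters")
```

By these numbers, GPT-2 is roughly 13x the size of GPT-1 and GPT-3 is roughly 117x the size of GPT-2. Parameter count is only a crude proxy for capability, so treat this as a sense of scale and nothing more.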

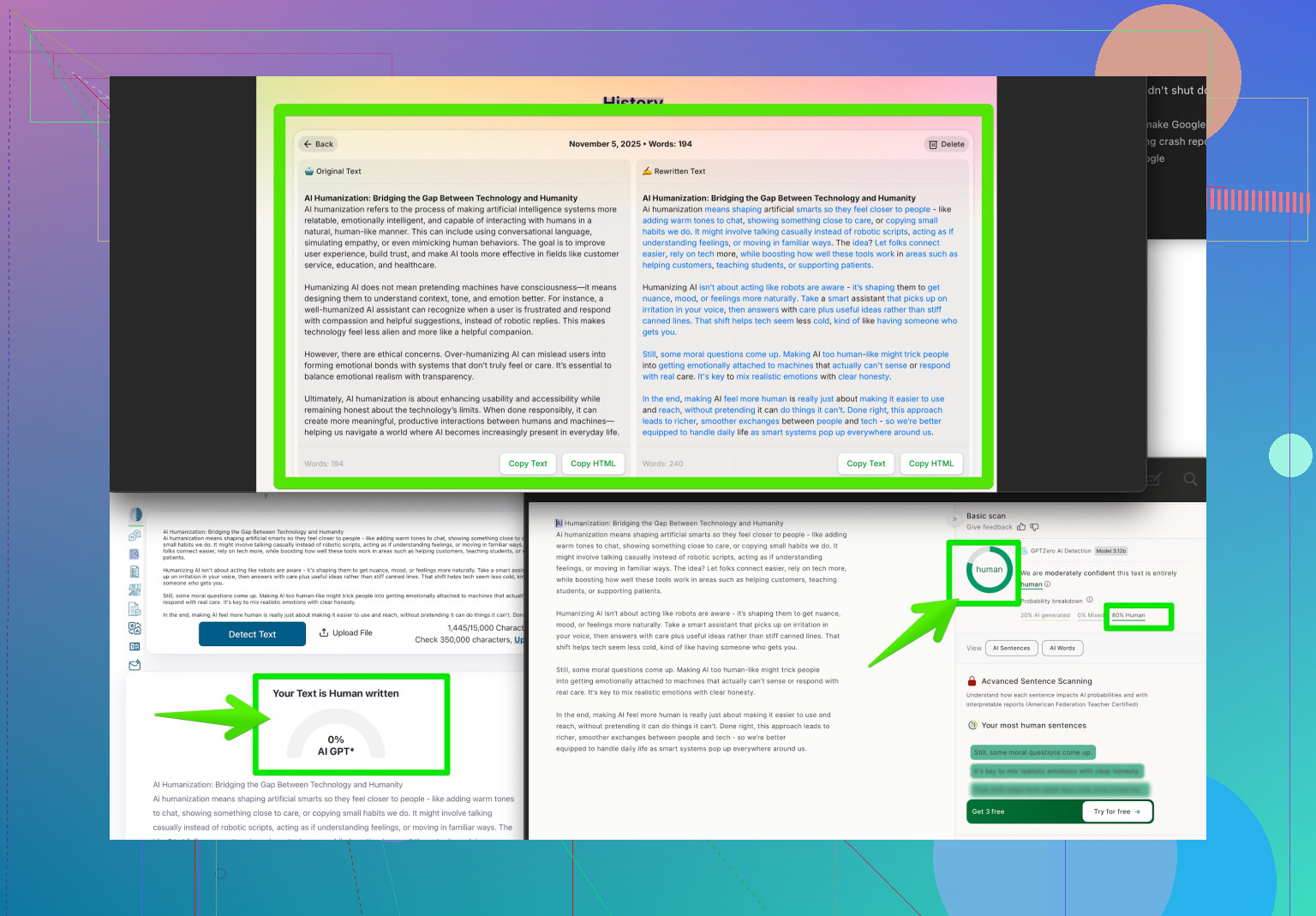

If your goal is to use AI content but have it sound more human (to avoid that robot vibe), you probably want to check out something like a humanizer tool. I’ve seen a lot of talk about “Clever AI Humanizer”, which is supposed to make AI-generated text come out more natural and harder to spot as machine-written. If that sounds handy, it might be worth a look.

TL;DR: GPT-0 isn’t a real thing, just a meme. If you want your AI content to pass as human, Clever AI Humanizer is one option to try.

I totally get why “GPT-0” throws people off—honestly, it pops up nonstop in tech memes and random comment threads, but it’s not actually a real AI model. As @viaggiatoresolare pointed out, “GPT-0” is kind of a running joke. Like, if you’re not using AI for something, or you want “full manual mode,” you might just say “that’s GPT-0 for you”—aka, just a regular person doing regular stuff.

But if we’re being technical, OpenAI kicked things off with “GPT” (also called GPT-1), which actually existed and had a little over 100 million parameters—baby numbers compared to today’s monsters. The real jumpstarts were GPT-2 (suddenly huge) and then GPT-3, which became what everyone actually cared about. If you’re looking for a timeline, it’s basically: GPT-1 (test run), GPT-2 (wow, this works), GPT-3 (the viral era), GPT-4 (way more under wraps but next level in reasoning). Nothing labeled GPT-0 ever came out, so you won’t find docs or sample code. Anyone seriously referencing “GPT-0” is either joking or trying to be edgy.

On the humanized AI note: Sure, tools like Clever AI Humanizer are popping up everywhere, and there’s a reason—people really want things to sound less bot-like. Don’t sleep on them if you want natural text (and hey, they’re not magic, but it’s a leg up). As for the comparison to newer AIs, it’s not even a thing because there’s no “GPT-0” to compare—it’s basically “humans vs. AI.”

For those digging for legit strategies to make AI sound more human in content or writing, I found this awesome Reddit roundup with tips that actually work. Check out Reddit’s top humanization tricks for AI writing—it might give you a head start before you jump into more advanced tools.

In short: “GPT-0” = just a meme, not a secret first draft from OpenAI. If you’re after that undetectable, human-like flair, tools like Clever AI Humanizer (and some solid internet tips) will get you closer than anything you’ll find searching for non-existent “GPT-0” docs.

Quick FAQ time—“GPT-0” isn’t real, so if you’re lost looking for it, definitely don’t feel alone. It’s internet shorthand for “no AI” or human output, and pops up as a tongue-in-cheek reference in tech circles, just as both @ombrasilente and @viaggiatoresolare already pointed out. Actual OpenAI models started at GPT-1 (the prototype), then ballooned through GPT-2, GPT-3, and GPT-4. “GPT-0” isn’t an undiscovered prequel or secret lab project.

If your angle is making AI writing pass as human—yeah, that’s a legit concern now. Clever AI Humanizer is the new kid on the block for this. Pros? It can take your stiff, obviously bot-generated draft and soften it up, inject warmth, add contractions—generally, make the AI less obvious. Which is huge if you’re pitching blog posts, academic work, or marketing copy that needs to be “clean.” Cons? Automated humanization sometimes overdoes it or introduces tone inconsistencies, so you’ll want to double-check the final output. Also, some AI detectors are always playing catch-up, so it’s not a guarantee if you’re chasing 100% stealth.

Vs. competitors like GPT detectors or classic paraphrasers, Clever AI Humanizer is all about style smoothing and flow, instead of just swapping words. If you want to blend in your AI-powered content with regular writing, definitely worth a run-through.

Bottom line: Stop hunting for “GPT-0” as a model and focus instead on using tools to get the vibe you want. Humanizer tech like Clever AI Humanizer is a solid shortcut, with a few quirks, but it beats endless self-editing when you’re in a crunch.