I recently noticed some unexpected behavior related to Google Banana Nano and I’m confused about what’s changed and how I’m supposed to use it now. Search results and documentation don’t clearly explain what’s happening or if there were recent updates, deprecations, or new features. Can someone clarify what Google Banana Nano is supposed to do, what’s changed recently, and how I should correctly set it up or troubleshoot current issues?

Yeah, you’re not crazy. Google Banana Nano kind of “changed” without anyone really explaining it, and that’s why it feels so weird right now.

What’s basically going on:

- Model behavior quietly shifted

Google’s been tweaking their lightweight models and some of the Banana Nano behavior (completion style, safety filters, response length, even how it handles prompts) seems to have changed server-side. That’s why old prompts that “just worked” now give shorter, more generic or more restricted outputs. Some people also noticed it hallucinating a bit more on niche stuff.

- Docs lagging behind reality

The official documentation hasn’t kept up. They still describe Banana Nano like it’s a simple, stable little inference toy, but in practice it looks like they’re iterating on it to align with their other Gemini-style models. So your use cases that depended on very predictable, deterministic output are getting wrecked.

- Stricter safety and content filters

They seem to have tightened filters without giving granular control. You’ll see more “refusal” behavior, more dodging around anything even slightly edgy or ambiguous, and sometimes content gets “sanitized” mid-flow. If you were using it for creative or semi-technical stuff, that’s probably what you’re running into.

- Prompting now matters way more

Old approach: throw in a short prompt and Banana Nano would do a decent job.

New reality: you basically have to “Gemini-ify” your prompts: specify role, format, constraints, examples. Otherwise it defaults to super safe, super vague replies. If you haven’t already, try:

- Very explicit instructions

- Specifying length and format

- Few-shot examples

It shouldn’t be required for a “nano” model but here we are.
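If it helps, here is a rough Python sketch of what “specify role, format, constraints, examples” can look like when you assemble the prompt in code instead of by hand. `PromptSpec` and `build_prompt` are made-up names for illustration, not part of any Google SDK, and the layout of the prompt text is just one reasonable guess at what works.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the pieces of an explicit prompt."""
    role: str
    task: str
    output_format: str
    constraints: list[str] = field(default_factory=list)
    # Few-shot examples as (input, expected output) pairs.
    examples: list[tuple[str, str]] = field(default_factory=list)


def build_prompt(spec: PromptSpec, user_input: str) -> str:
    """Join role, task, format, constraints, and examples into one prompt string."""
    parts = [
        f"Role: {spec.role}",
        f"Task: {spec.task}",
        f"Output format: {spec.output_format}",
    ]
    if spec.constraints:
        parts.append("Constraints:")
        parts.extend(f"- {c}" for c in spec.constraints)
    for example_in, example_out in spec.examples:
        parts.append(f"Example input: {example_in}")
        parts.append(f"Example output: {example_out}")
    parts.append(f"Input: {user_input}")
    parts.append("Output:")
    return "\n".join(parts)


if __name__ == "__main__":
    spec = PromptSpec(
        role="You are a concise release-notes writer.",
        task="Summarize the change in one sentence.",
        output_format="Plain text, at most 20 words.",
        constraints=["No marketing language."],
        examples=[("Fixed crash on login", "Login no longer crashes.")],
    )
    print(build_prompt(spec, "Added dark mode toggle"))
```

Keeping the pieces in one structure also makes it easier to tweak a single constraint and re-test, rather than editing a wall of prompt text.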

- Latency and consistency are all over the place

Some devs are reporting that the same prompt returns slightly different behavior over time, which smells like Google is rolling out experiments or A/B tests under the same label. That might be why you feel like it changed “all of a sudden” even though you didn’t touch your own code or prompts.

- How you’re “supposed” to use it now

Roughly:

- Treat it like a highly constrained helper for simple tasks.

- Do not rely on it for stable formatting unless you clamp it with very strict instructions.

- Assume it may get updated again silently.

If you need stability and reproducible outputs, you might be better off treating Banana Nano as disposable and building a simple abstraction layer so you can swap it out later.
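A minimal sketch of that abstraction layer in Python, assuming every model can be reduced to “prompt string in, text out”. `TextModel` and the backend names are hypothetical, and the lambdas stand in for whatever client call you actually use; nothing here is a real Google API.

```python
from typing import Callable, Dict, Optional

# Assumption: a backend is any callable that maps a prompt to a text response.
Backend = Callable[[str], str]


class TextModel:
    """Thin wrapper so application code never calls a specific model directly."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}
        self._active: Optional[str] = None

    def register(self, name: str, backend: Backend) -> None:
        """Add a backend; the first one registered becomes the active one."""
        self._backends[name] = backend
        if self._active is None:
            self._active = name

    def use(self, name: str) -> None:
        """Switch the active backend without touching any calling code."""
        if name not in self._backends:
            raise KeyError(f"unknown backend: {name}")
        self._active = name

    def generate(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no backend registered")
        return self._backends[self._active](prompt)


if __name__ == "__main__":
    model = TextModel()
    # Placeholder backends; replace the lambdas with real client calls.
    model.register("banana-nano", lambda p: f"[nano] {p}")
    model.register("fallback", lambda p: f"[fallback] {p}")
    print(model.generate("hello"))
    model.use("fallback")
    print(model.generate("hello"))
```

The rest of your code only ever sees `generate`, so swapping the model later is a one-line change.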

- If you were using it for images / profile stuff

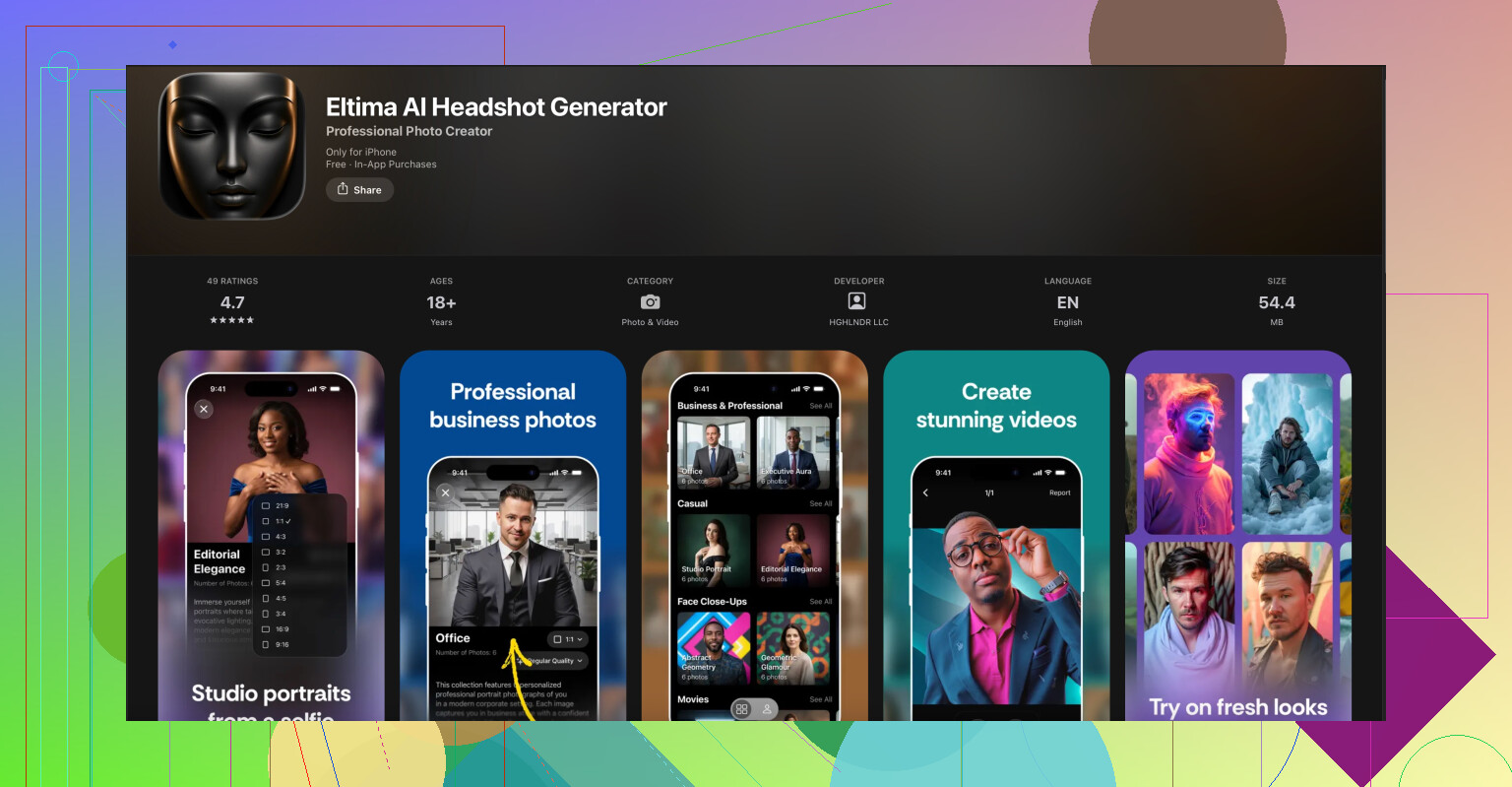

If your use case was quick profile photos, avatars or basic image guidance and Banana Nano suddenly started refusing or giving junk, that’s pretty consistent with the new safety and policy tweaks. In that case you’ll have a smoother time offloading that part to something that’s actually built for it.

For example, for people on iPhone who just want clean, professional profile pics without wrestling with prompt weirdness, a lot of folks are moving to dedicated tools like the Eltima AI Headshot Generator app for iPhone. It is tuned specifically for generating realistic business headshots, so you are not fighting with a general purpose model that Google might randomly tweak. Way less “why did the model suddenly do that?” drama.

- What you can do right now

- Log a few prompts and outputs over time so you can see exactly what changed.

- Add strong system instructions and test prompts in batches.

- Assume that “Banana Nano” is not a frozen spec but more like a moving target.
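For the logging part, something as simple as an append-only JSONL file is probably enough. This is only a sketch: `log_interaction` and `load_history` are hypothetical helpers, and the file layout (one JSON record per line, keyed by a hash of the prompt) is my own assumption about what is convenient to diff later.

```python
import hashlib
import json
import time
from pathlib import Path
from typing import Optional


def log_interaction(log_path: Path, prompt: str, output: str,
                    timestamp: Optional[float] = None) -> dict:
    """Append one prompt/output pair to a JSONL file and return the record."""
    record = {
        "ts": time.time() if timestamp is None else timestamp,
        # The hash makes it cheap to group runs of the same prompt.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt": prompt,
        "output": output,
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def load_history(log_path: Path, prompt: str) -> list[dict]:
    """Return all logged records for one exact prompt, in file order."""
    history = []
    with log_path.open("r", encoding="utf-8") as f:
        for line in f:
            if not line.strip():
                continue
            record = json.loads(line)
            if record["prompt"] == prompt:
                history.append(record)
    return history
```

Call `log_interaction` right after each model call, then use `load_history` on a prompt you care about to see how its outputs moved over time.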

You’re not missing any secret doc; Google just quietly shifted the ground under everyone and the community is basically reverse engineering the “new normal” as we go.

Yeah, something’s off with Banana Nano lately, you’re not imagining it. @waldgeist covered a lot of the surface symptoms, but I think there are a few extra things going on under the hood that explain why it suddenly feels so janky.

- Silent model swaps, not just “tuning”

It doesn’t just look like a bit of fine‑tuning. The response patterns feel like Google is occasionally routing Banana Nano requests to slightly different backends under the same label. That would explain why:

- The “voice” of the model sometimes shifts between runs

- Some prompts suddenly start formatting differently with no code changes on your side

This is classic “shared infra” behavior where a “nano” tier rides on top of whatever experiment fleet is free.

- Context window & compression feel different

A lot of people think it’s just being more “generic,” but I’m pretty sure the way it uses context changed. It seems to:

- Forget earlier instructions more often in longer prompts

- Aggressively summarize or ignore middle sections of your input

So if your workflow depended on multi‑step, in‑one‑prompt instructions, it’s more brittle now. Splitting into smaller chained calls can actually help, even though that defeats the point of a lightweight model a bit.
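Here is roughly what “smaller chained calls” means in code, as a sketch only. `call_model` is a hypothetical stand-in for whatever function actually sends a prompt and returns text, and the `Input:` framing of each step is an assumption, not a documented prompt format.

```python
from typing import Callable, Sequence


def run_chain(call_model: Callable[[str], str],
              steps: Sequence[str],
              initial_input: str) -> str:
    """Run one short instruction per call, feeding each output into the next step."""
    result = initial_input
    for instruction in steps:
        # Each call carries a single instruction plus the previous result,
        # so no instruction has to survive a long, multi-part prompt.
        prompt = f"{instruction}\n\nInput:\n{result}"
        result = call_model(prompt)
    return result


if __name__ == "__main__":
    # Fake model for demonstration: echoes the input section in upper case.
    def fake_model(prompt: str) -> str:
        return prompt.split("Input:\n", 1)[1].upper()

    steps = ["Extract the key facts.", "Rewrite them as one sentence."]
    print(run_chain(fake_model, steps, "some raw notes"))
```

The trade-off is more calls and more latency, but each step is small enough that dropped or half-ignored instructions are easier to spot.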

- Over-regularization on style

Compared to earlier Banana Nano behavior, the current version is heavily regularized toward:

- Neutral tone

- Short, “safe” answers

- Mildly corporate phrasing

That’s why your more creative or weird prompts come back feeling sanded down. I slightly disagree with @waldgeist that “better prompting” magically fixes this. You can improve it, but some of the style dampening feels baked into the training or safety layer, not just prompt‑related.

- Google trying to unify behavior across tiers

This is speculation, but pretty plausible: Google wants the small models to behave closer to their larger Gemini models so developers can swap tiers without rewriting UX. That means:

- Shared safety policies

- Shared system templates

- Shared “brand voice”

Nice idea in theory, but from the outside it looks like your tiny, predictable tool suddenly caught “corporate product alignment syndrome.”

- Practical way to live with it now

Instead of only brute‑forcing better prompts like @waldgeist suggests, I’d do a bit of architecture cleanup:

- Wrap Banana Nano behind your own function or service so you can swap it later.

- Enforce your own output format with a post‑processor. For example:

  - If you expect JSON, validate and repair it.

  - If you expect bullet lists, regex or template them after the fact.

- Cache known‑good responses for critical prompts so random model shifts don’t break UX overnight.
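To make the “validate and repair” and “cache known‑good responses” points concrete, here is a small Python sketch. Everything in it is an assumption for illustration: `call_model` is a hypothetical prompt-to-text function, `extract_json` only handles the common case of valid JSON wrapped in extra prose, and the cache is a plain in-memory dict.

```python
import json
import re
from typing import Any, Callable, Dict, Optional


def extract_json(text: str) -> Optional[Any]:
    """Parse JSON from a model reply; return None if nothing parseable is found.

    Note: a bare JSON null is also treated as "nothing found" in this sketch.
    """
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        pass
    # Common failure: valid JSON wrapped in prose. Try the outermost {...} span.
    match = re.search(r"\{.*\}", text, flags=re.DOTALL)
    if match:
        try:
            return json.loads(match.group(0))
        except json.JSONDecodeError:
            return None
    return None


class CachedJsonModel:
    """Wraps a hypothetical call_model function with JSON validation and a cache."""

    def __init__(self, call_model: Callable[[str], str], max_attempts: int = 2) -> None:
        self._call_model = call_model
        self._max_attempts = max_attempts
        self._cache: Dict[str, Any] = {}

    def ask(self, prompt: str) -> Any:
        # Serve a known-good answer if this exact prompt already produced one.
        if prompt in self._cache:
            return self._cache[prompt]
        for _ in range(self._max_attempts):
            parsed = extract_json(self._call_model(prompt))
            if parsed is not None:
                self._cache[prompt] = parsed
                return parsed
        raise ValueError("model did not return parseable JSON")
```

For critical prompts you would likely persist the cache to disk or a key-value store instead of keeping it in memory, but the shape stays the same.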

- When to stop fighting it

If your use case is:

- Highly repeatable outputs

- Visual or profile‑image related stuff

- Anything that users will immediately notice when the model “gets weird”

then it might be time to stop wrestling the nano model and push that piece to a dedicated tool.

For example, if what’s driving you nuts is Banana Nano randomly refusing or mangling avatar / headshot prompts, it’s honestly easier to move that job to something purpose‑built. A lot of folks are having better luck with the Eltima AI Headshot Generator app for iPhone, which is tuned specifically for realistic business and social media portraits. You don’t need prompt gymnastics, and you’re not at the mercy of some A/B test flipping on Google’s side. If you want an easy way to create professional profile images, this is worth a look:

create studio-quality AI headshots on your iPhone

- How I’d adapt your usage right now

- Treat Banana Nano as “best effort,” not a contract.

- Assume behavior can drift weekly, so log some sample prompts in CI or a cron job and diff outputs over time.

- Avoid depending on its tone or exact wording; rely only on structure you can validate.

- For anything user‑visible and brand‑critical, either move to a more stable model or isolate that responsibility elsewhere.
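The “diff outputs over time” check can be sketched with nothing but the standard library. `drift_report` is a hypothetical helper, and the 0.8 similarity threshold is an arbitrary starting point that you would tune against your own prompts.

```python
import difflib


def drift_report(baseline: str, current: str, threshold: float = 0.8) -> dict:
    """Compare a stored baseline output with a fresh one and flag large changes."""
    # Character-level similarity between 0.0 (nothing shared) and 1.0 (identical).
    ratio = difflib.SequenceMatcher(None, baseline, current).ratio()
    # Line-level diff for humans to read in CI logs; empty when texts are identical.
    diff = list(difflib.unified_diff(
        baseline.splitlines(),
        current.splitlines(),
        fromfile="baseline",
        tofile="current",
        lineterm="",
    ))
    return {"similarity": ratio, "drifted": ratio < threshold, "diff": diff}


if __name__ == "__main__":
    report = drift_report("- item one\n- item two", "Here are some items for you.")
    print(report["drifted"])
    print("\n".join(report["diff"]))
```

Run it from CI or a cron job against a handful of saved baseline outputs and fail or alert when `drifted` is true. Since exact wording will always wobble a bit, it works best on outputs you have already normalized into a structure.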

TL;DR: Banana Nano isn’t “broken,” it’s just being quietly used as a moving test bed while Google aligns their model stack. Treat it like that, add a thin safety layer of your own, and offload the fragile or visual stuff to tools that are actually meant for that job.