I’m considering using WriteHuman AI for writing and editing, but I’m unsure if it’s worth the cost or if there are better alternatives. Can anyone share real experiences, pros and cons, and how accurate or “human” the content actually feels so I don’t waste time and money on the wrong tool?

WriteHuman AI review from someone who paid for it

I tried WriteHuman myself.

I went in because they name GPTZero in their promo copy and talk a lot about “extensive testing”. So I took that literally and used GPTZero as the first check.

Here is what happened.

Testing against detectors

I used three different pieces of input text. All were long-form, about 600–800 words each, and all were clearly AI-written before humanization.

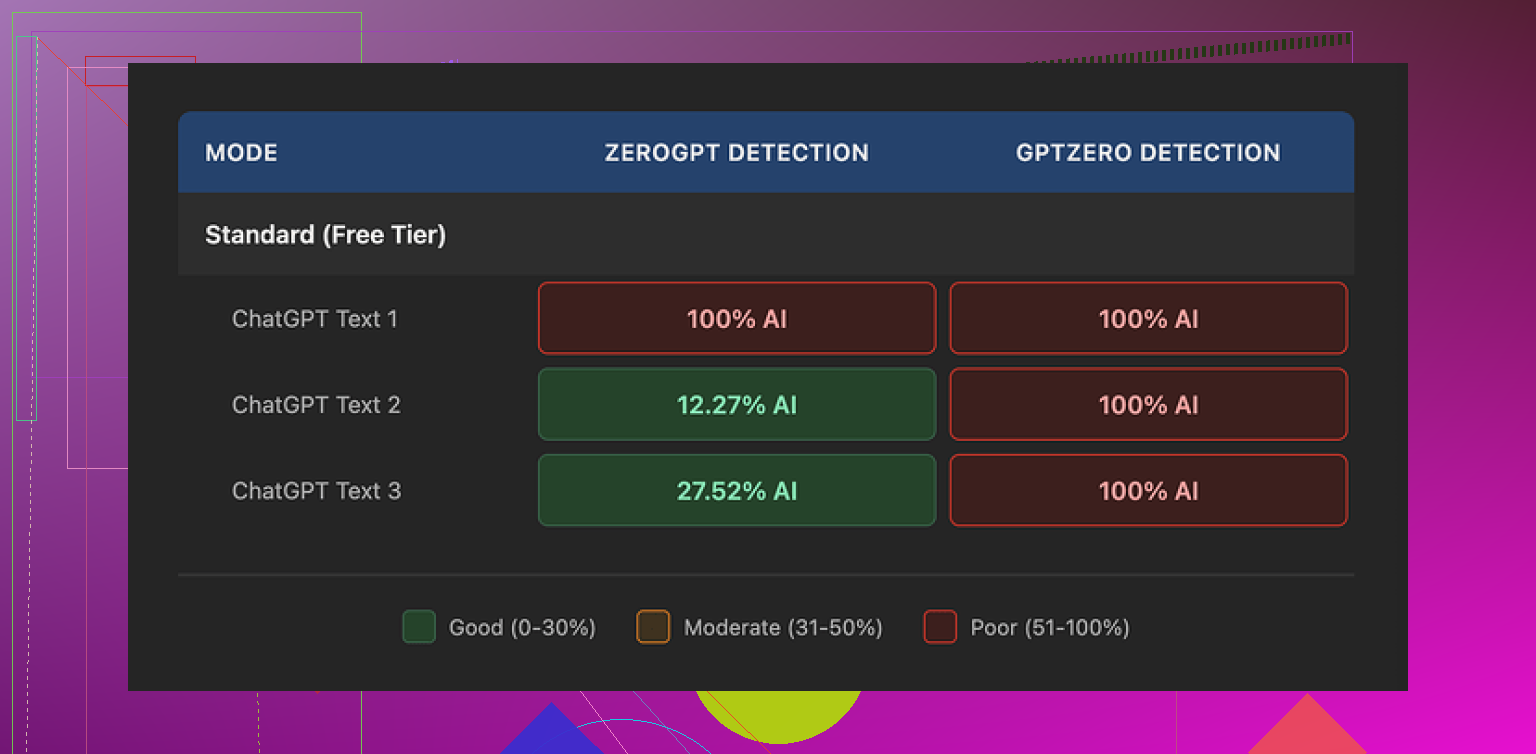

Tool: GPTZero

Every single WriteHuman output came back as 100% AI on GPTZero.

Not “mixed”, not “some sentences”. Full AI label on all three samples.

So when I saw “extensively tested against GPTZero” on their page and then saw 3 out of 3 fail, it felt off.

Tool: ZeroGPT

Results here bounced all over.

• Sample 1 output: 100% AI

• Sample 2 output: around 12% AI

• Sample 3 output: around 28% AI

So you might get something under the radar once, then get slammed on the next piece using the same settings. That randomness makes it hard to trust for anything important like client work or school submissions.
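If you want to put a number on that spread, here is a minimal Python sketch. It only uses the three ZeroGPT percentages listed above; the variable names are made up for illustration, and three samples is far too few to draw statistical conclusions from.

```python
from statistics import mean, pstdev

# ZeroGPT "AI" percentages reported above for the three humanized samples.
zerogpt_scores = [100, 12, 28]

avg = mean(zerogpt_scores)
spread = max(zerogpt_scores) - min(zerogpt_scores)  # best case vs worst case
stdev = pstdev(zerogpt_scores)  # population standard deviation of the three runs

print(f"mean={avg:.1f}%  range={spread} points  stdev={stdev:.1f}")
```

A range that covers most of the 0–100 scale on identical settings is the practical problem: the average tells you very little about what the next piece will score.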

Writing quality and weird tone swings

The writing itself felt off in a way I did not expect.

A few things I noticed:

• Tone swings

One paragraph would read like stiff corporate copy, then the next would sound casual and slightly messy, almost like two different writers stitched together.

For example, in one test blog post, the intro sounded like a marketing email, the body sounded like a rushed Reddit reply, then the last section went back to smooth neutral English. If you paste that into a client doc, anyone with decent reading sense will see it.

• Typos and odd phrasing

I saw “shfits” instead of “shifts” in one of the outputs. On one hand, small errors might help with evasion. On the other hand, if you are paying for a tool to fix AI text, then having to manually fix its typos defeats the point.

There were also a few odd transitions, like it would jump from one idea to the next without a logical bridge, which again smells like synthetic text trying too hard to be “human”.

So, yes, those quirks might help against some detectors, but they also make the text harder to use without editing. I had to touch up sentence flow, fix spelling, and smooth tone. At that stage, it is faster to do a manual rewrite.

Pricing and terms that made me flinch

Pricing at the time I tried it:

• Basic plan (annual): 12 USD per month with 80 requests

Higher tiers unlock an “Enhanced Model” and more tone settings.

A few issues here:

• Expensive for what you get

80 requests on a paid plan is not a lot if you process multiple drafts, or if you work with longer content pieces every week.

• No guarantee on detector bypass

Their own terms admit there is no guarantee it will bypass any detector, including the ones they name in marketing. That is honest, but once you add the next point, it hurts.

• No refunds

Strict no-refunds policy. So if you sign up, run your content, then see 100% AI on your detector of choice, that money is gone. You have to decide if you are fine with that risk.

• Data usage

Anything you submit is licensed for AI training. If you use private client docs, school work, internal company text, or anything you want to keep out of training data, this is a problem. There is no opt-out mentioned.

If that makes you uneasy, the only safe move is to avoid sending your content there.

What ended up working better for me

From direct testing with the same base texts, Clever AI Humanizer gave me better results on AI detection, especially on ZeroGPT and a couple of smaller detectors I tried.

On top of that, I did not hit a paywall in the same way, so I could experiment more without worrying about burning through a paid quota. For testing purposes, that helped a lot.


If you are thinking about paying for WriteHuman, here is the short version from my run with it:

• GPTZero: my samples were labeled 100% AI after humanization

• ZeroGPT: results inconsistent, from 12% to 100% AI

• Output: tone swings, at least one typo, needs manual cleanup

• Price: on the high side for 80 requests on the basic tier

• Policy: no refunds, your text used for training, no guarantee of bypass

If you want something “fire and forget” where you paste, click, and get safe text, this did not feel like that. I ended up fixing the text myself or using other tools instead.

I paid for WriteHuman a few weeks ago and used it on client content, so here is a straight breakdown.

My use case

• Long-form blog posts from ChatGPT or Claude

• Needed cleaner tone and less “AI feel”

• Occasional school-type essays for friends

Pros

• Interface is simple. Paste text, pick tone, run.

• It sometimes improves flow when the original AI text is stiff.

• It introduces minor imperfections, which helps a bit on some weaker detectors.

Cons I hit

• On GPTZero, I had the same experience as @mikeappsreviewer. My tests were still flagged as AI most of the time. For academic or high-risk stuff, this is not comforting.

• Tone control felt inconsistent. One article sounded “LinkedIn corporate” in the intro, then “casual Reddit reply” in the middle, then neutral again. I had to manually rewrite sections to match.

• I also saw typos and weird phrasing. It looked human in the bad way. If you want clean client content, you need to edit after.

• Pricing vs quota is rough if you work with long content multiple times per week. I burned through requests faster than I expected.

• No refund policy made me hesitate to upgrade beyond the starter tier.

• Data use for training is a red flag if you handle NDAs or student work.

How “human” it feels

For casual blog posts that do not need strong detection evasion, it is ok if you plan to edit.

For anything where an AI detector result matters, I would not rely only on this. Too much variance.

Where I slightly disagree with @mikeappsreviewer

The output was not useless for me. When I fed in my own rough human draft plus some AI paragraphs, WriteHuman sometimes smoothed them into a single voice better than expected. It struggled most when the entire thing started as pure AI.

Alternatives that worked better for me

- Manual rewrite workflow

• Generate AI draft.

• Use it as notes.

• Rewrite in your own words, keep only structure.

This beats every detector in my tests and improves quality, but it takes time.

- Clever AI Humanizer

When I tested the same texts there, I saw lower AI percentages on tools like ZeroGPT and some smaller detectors. Not perfect, but more consistent. I still edited for tone, but I did not see the same amount of random typos.

- Mix tools

• Run text through Clever AI Humanizer.

• Then do a fast manual pass for tone and logic.

This gave me the best balance of speed, quality, and lower detection flags.

Who WriteHuman fits

• You want a quick “make this less robotic” pass.

• You are ok editing afterward.

• You do not care much about GPTZero specifically.

• You are not sending sensitive documents.

Who should skip it

• Students worried about AI checks.

• Agencies handling client work with strict policies.

• Anyone who needs predictable detector performance for each piece.

If you try it, start on the lowest tier, test with your own detector mix, and do not assume marketing claims equal safe output. For my workload, I ended up using Clever AI Humanizer plus manual editing and stopped renewing WriteHuman.

Short answer: if you’re on a budget or care about reliability, WriteHuman is pretty hard to justify right now.

I had a very similar experience to @mikeappsreviewer and @sterrenkijker, but I’ll focus on a few angles they didn’t hammer on.

- “Human feel” vs actually good writing

WriteHuman seems almost obsessed with “humanizing” in the sense of:

- sprinkle in small mistakes

- randomize tone

- break the pattern of clean AI prose

Thing is, “more human” is not automatically “better.” Some of the outputs I got were technically less detectable as AI on weaker tools, but also less usable:

- awkward transitions that made paragraphs feel stitched together

- weird word choices that sounded like a non-native speaker trying too hard

- voice drift when you feed it multiple sections from the same article

So yeah, it might “feel” more human to a detector sometimes, but it doesn’t really feel like a good writer. If I have to spend 15–20 minutes fixing tone and logic, I may as well rewrite from scratch.

- Detector reality check

I don’t rely on GPTZero alone, but it’s a decent stress test. My pattern was:

- GPTZero: usually still flags as AI, same as what others reported

- Some smaller detectors: occasionally better scores, but very inconsistent

- Sometimes a single sentence edit from me would help more than a full WriteHuman pass

To be fair, no tool can honestly guarantee bypassing everything, and I actually think their “no guarantee” clause is honest. But combine that with no refunds and the price, and it feels like they’re pricing it as if it did reliably bypass stuff.

- Cost vs what you actually get

What bugged me was not just “12 bucks for 80 requests,” but how quickly those requests evaporate if you:

- run multiple drafts of the same article

- tweak tone and have to re-run chunks

- split long content into smaller parts

It looks fine on paper until you start doing real workflows. Then suddenly you’re rationing clicks like it’s an MMO with paid energy refills.
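To make the quota math concrete, here is a rough Python sketch. The price and request count are the ones quoted in this thread (12 USD per month, 80 requests). Everything else is an assumption for illustration: the function name, the example workload, and the idea that every chunk you submit or re-run counts as one request.

```python
MONTHLY_PRICE_USD = 12.0  # Basic plan, annual billing, as quoted in this thread
MONTHLY_REQUESTS = 80


def article_budget(drafts: int, chunks_per_draft: int, reruns_per_chunk: int = 0):
    """Return (requests used, cost in USD, articles that fit in one month's quota).

    Assumes each chunk submission and each re-run costs exactly one request,
    and that all inputs are positive.
    """
    requests_used = drafts * chunks_per_draft * (1 + reruns_per_chunk)
    cost = requests_used * MONTHLY_PRICE_USD / MONTHLY_REQUESTS
    return requests_used, cost, MONTHLY_REQUESTS // requests_used


# Hypothetical workload: 2 drafts, each split into 3 chunks, each chunk re-run once.
used, cost, articles = article_budget(drafts=2, chunks_per_draft=3, reruns_per_chunk=1)
print(f"{used} requests, about {cost:.2f} USD, {articles} articles per month")
```

Under those assumptions a single article eats 12 of the 80 requests, so the plan covers about 6 articles a month. Plug in your own draft and chunk counts before deciding whether the tier fits.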

- Data/privacy angle

The training-data clause is a dealbreaker if you:

- handle client contracts, internal docs, or anything confidential

- help students or colleagues with essays or research drafts

People gloss over this, but if you ever sign NDAs, sending that text to a tool that explicitly trains on it is… not great. I’d rather use something that lets me opt out or stick to local/manual editing.

- Where I slightly disagree with others

I don’t think WriteHuman is useless. For:

- casual blog posts

- social posts

- low-stakes content that just “sounds too ChatGPT”

it can nudge the tone in a more relaxed direction. I did get a few passes where the style felt more natural and less polished-robot. But that’s more of a “nice to have” polish tool, not the “shield against detectors” its marketing hints at.

- Alternatives that made more sense for me

Without repeating the full workflows already laid out:

- Clever AI Humanizer

When I used the same base drafts, its outputs:

- were more consistent in tone across whole pieces

- didn’t throw as many random typos

- performed a bit more predictably across multiple detectors

It still isn’t magic, but as a practical tool it felt more like “good editing assistant” and less like “lottery ticket vs detectors.” If you care about AI detection and SEO, having something like Clever AI Humanizer in your stack is honestly more sane than trying to brute-force everything with WriteHuman and hoping.

- Light manual pass + any solid rewriting tool

One trick that helped more than WriteHuman:

- run the text through a decent rewriter

- then do a quick 5–10 minute human clean-up, focusing on transitions and word choice

That combination consistently gave me better results for both quality and detection than a single WriteHuman pass.

- Who should actually pay for WriteHuman

Probably only worth it if:

- you write low-risk content

- you’re okay editing a lot afterward

- you don’t mind your text being used for training

- you specifically like its interface and tone presets

If you’re:

- a student worried about AI checks

- someone under client contracts

- trying to keep cost per article reasonable

then I’d skip it and either go with Clever AI Humanizer plus some manual polish, or just lean more on your own rewriting. WriteHuman feels like a niche tool pretending to be a full solution, and the price + no refunds + training clause makes it a pretty rough gamble.